Google used its Cloud Next 2026 conference to deliver a clear message to enterprise customers and investors: the commercial future of artificial intelligence will be shaped less by standalone chatbots and more by agents that can execute tasks across software systems, data environments and workplace tools. In practical terms, that means Google is trying to move the market conversation from AI as a productivity add-on to AI as an enterprise control layer embedded in daily operations.

The emphasis is strategically significant for Alphabet because it arrives at a moment when the company is under pressure to show that its large investments in models, chips and data center infrastructure can produce repeatable enterprise spending. Consumer AI visibility has largely centered on search, mobile assistants and general-purpose chat tools, but the higher-value battleground for recurring revenue remains the enterprise, where software contracts are longer, switching costs are higher and platform decisions can reshape years of IT spending. At Cloud Next, Google’s pitch was that agents are the mechanism through which that monetization will happen.

Reuters reported that Alphabet executives presented AI agents as a linchpin of the company’s strategy to make money from AI in business settings, with chief executive Sundar Pichai and Google Cloud leadership arguing that the market is moving out of the experimental phase and into broader production deployment. That framing matters because enterprise buyers have spent the last two years testing large language models in pilot projects while delaying wider rollout over concerns about accuracy, governance, security, integration complexity and unclear return on investment. Google is now trying to persuade customers that those barriers are becoming manageable, and that the time for scaled adoption has arrived.

At the center of the announcements was a more unified enterprise AI platform built around Gemini Enterprise and agent development capabilities, which Google described as a front door for organizations building, deploying and managing autonomous or semi-autonomous AI systems. The company’s event materials positioned this as an environment where businesses can create custom agents, connect them to internal data, govern their behavior and make them available across workflows. The value proposition is not simply that Google has strong models, but that it can package those models with the surrounding operational machinery enterprises need to trust and use them.

That packaging is essential because enterprise AI purchasing decisions are increasingly being made on system-level criteria. Companies want to know whether models can interoperate with existing infrastructure, whether they can maintain auditability, how permissions are enforced, and whether agents can act inside business applications without creating new security or compliance exposure. Google’s response at Cloud Next was to present AI agents as part of a broader stack rather than an isolated feature. By aligning model access, orchestration tools, governance layers and infrastructure under a single enterprise story, it is trying to reduce the perceived friction of deployment.

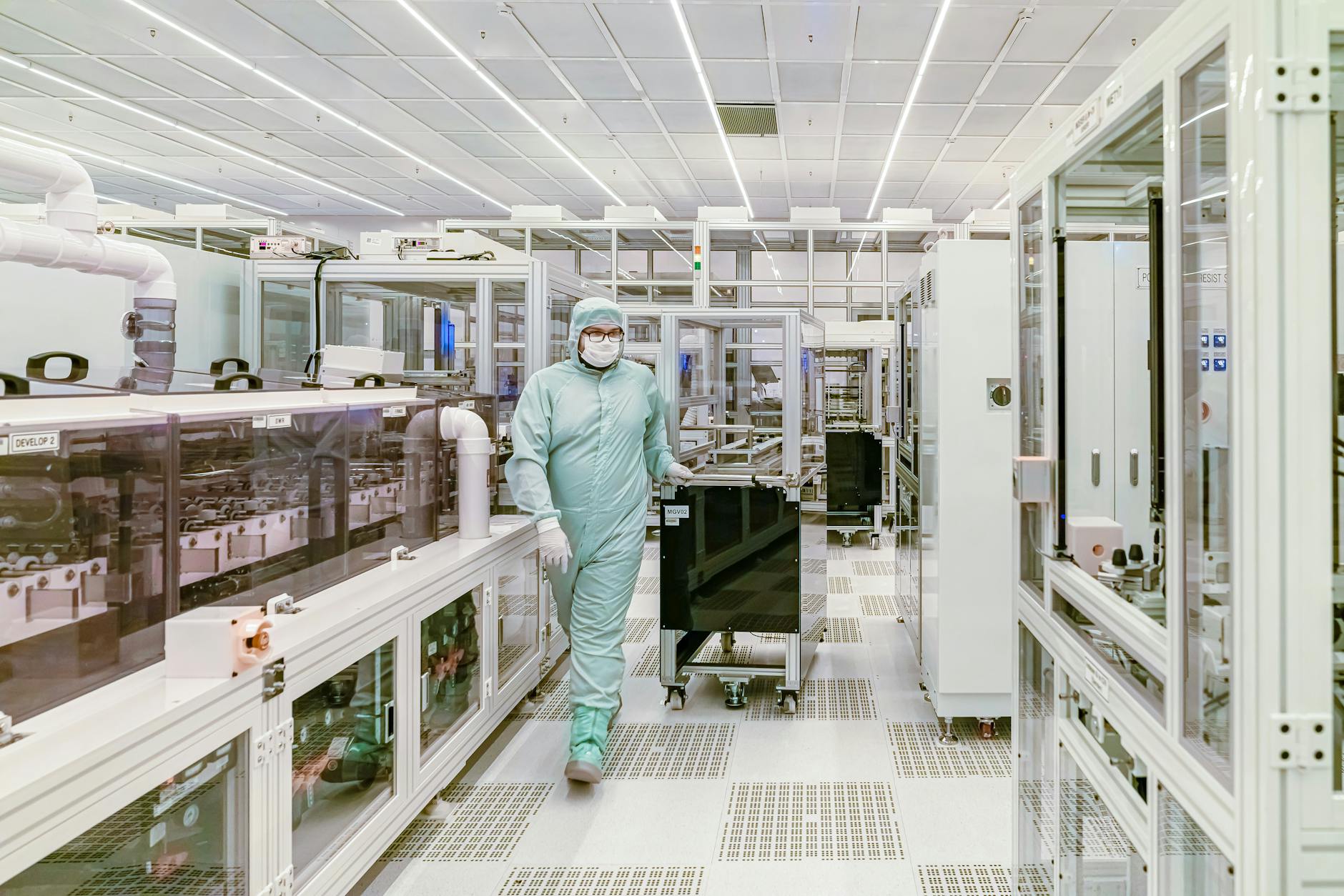

The infrastructure component of the strategy was prominent. Google highlighted new eighth-generation TPU offerings aimed at both training and inference, underscoring that its AI ambitions are backed by proprietary silicon rather than a pure dependence on external chip suppliers. That positioning has two commercial benefits. First, it reinforces the case that Google can optimize performance and cost across its own cloud environment. Second, it differentiates the company in a market where Nvidia remains dominant but hyperscalers increasingly want to show customers that they have credible in-house alternatives for specific AI workloads. For enterprises, the implied promise is that Google can offer a more integrated path from model development to production at scale.

Pichai also used the event to reiterate the scale of Alphabet’s infrastructure spending plans for 2026. Reuters reported planned investment of roughly $175 billion to $185 billion in computing infrastructure, with more than half tied to cloud-related machine learning. That figure is important because it signals that Google sees enterprise AI demand not as a narrow product cycle but as a long-duration platform buildout. It also indicates how expensive the race has become. AI leadership is now a capital-intensive contest that requires spending simultaneously on chips, servers, networking, power, data centers, software tooling and partner ecosystems. Cloud vendors that cannot fund that stack risk becoming dependent layers in someone else’s platform.

Google’s effort to turn agents into the organizing principle of its enterprise strategy also reflects the changing economics of generative AI. Early adoption centered on text generation, summarization and coding assistance, which were relatively easy to demonstrate but often harder to operationalize at scale. Agents raise the commercial stakes because they are designed not just to respond, but to act: querying systems, invoking tools, moving information between applications, surfacing recommendations and, in some cases, completing business processes with limited human supervision. If enterprises accept that model, then the winning vendor may be the one that governs workflows, not just the one that supplies the best standalone model.

That is where Google is trying to widen the field of competition. It is not fighting only Microsoft Azure or Amazon Web Services on raw infrastructure, nor only OpenAI and Anthropic on model quality. Instead, it is trying to occupy the middle and upper layers of enterprise AI at once. The company’s conference messaging suggested that Google sees value in being a multi-layer provider: models from Gemini, infrastructure from Google Cloud and TPUs, orchestration through enterprise AI platforms, and governance across the entire deployment lifecycle. The goal is to create a stack compelling enough that customers buy more of Google’s environment as they scale AI use.

This full-stack argument has gained credibility from Google Cloud’s recent market-share momentum. Reuters cited Synergy Research data showing Google’s global cloud market share reached 14% at the end of 2025, still behind Amazon and Microsoft but continuing to narrow the gap. That is a meaningful base from which to push new enterprise software and AI services. Google does not need to be the largest cloud platform to make the agent strategy work; it needs to convince CIOs and business leaders that its AI tools can improve productivity, accelerate automation and reduce friction in data-intensive operations. If those tools pull more workloads onto Google Cloud, the revenue effect compounds across infrastructure and software.

Cloud Next also showed how Google is trying to turn ecosystem alignment into a selling point. Enterprise customers rarely operate in a single-vendor environment, and one of the persistent challenges in AI rollouts has been stitching together data, governance and applications across multiple platforms. Google’s announcements and partner materials around the conference emphasized integrations with software vendors and implementation partners, including database and security relationships that can embed agentic AI into existing enterprise architectures. That matters because customers are more likely to adopt agents when they can fit into current environments rather than forcing wholesale migration.

Google’s partner push was not limited to interoperability. The company also announced a $750 million commitment to help partners develop and deploy agentic AI offerings, signaling that it wants a broader commercial channel around its enterprise AI stack. That is a classic enterprise software move. Platforms become durable not just through product launches, but through consultants, systems integrators, resellers and independent software vendors that package solutions for specific industries and functions. By subsidizing and accelerating that ecosystem, Google is trying to shorten the time between product announcement and real purchasing behavior.

Security and governance were another recurring theme, for good reason. AI agents introduce a different risk profile from ordinary copilots because they can be connected to live systems, internal data and external tools. An enterprise might accept some hallucination risk in a drafting assistant; it is far less likely to tolerate uncontrolled behavior in an agent acting across procurement, finance, support or engineering environments. Google’s positioning at Cloud Next reflected that reality, stressing oversight mechanisms, administrative control and policy management as part of the product story rather than afterthoughts. The company appears to understand that in enterprise AI, trust features are now revenue features.

That emphasis also responds directly to competitive pressure. Microsoft has leaned on its enterprise relationships, security posture and integration into workplace software to push Copilot deeper into corporate accounts. Amazon has focused on flexibility, model choice and infrastructure scale through AWS. OpenAI and Anthropic, meanwhile, are pushing increasingly capable tools that can move into programming, analysis and workflow execution. Google needs a narrative that distinguishes it from all three camps. Its answer is that the company can offer the broadest integrated path from chip to model to agent to governance, while still supporting multi-model enterprise environments where customers may want access to third-party systems alongside Google’s own.

The conference also revealed something about how Google thinks the enterprise market is evolving. For several quarters, cloud providers have framed generative AI as a source of incremental usage and experimentation. At Cloud Next 2026, Google sounded more confident that the market is beginning to standardize around production architectures. Comments by Google Cloud chief executive Thomas Kurian, as reported by Reuters, underscored the notion that the experimentation phase is giving way to growth. If that is correct, then buying decisions will increasingly hinge on operational maturity, pricing discipline and deployment speed. Under those conditions, AI agents are attractive because they can be sold not only as technology, but as measurable business process change.

There is, however, a substantial execution burden behind the ambition. Enterprises still need clearer proof that agents can deliver reliable returns without creating new cost and control problems. The technology itself remains uneven across tasks, and many organizations are still determining where human review must remain in the loop. The economics are also unsettled. Richer agentic workflows can generate more consumption of models and infrastructure, which benefits cloud vendors, but they also create pressure to show meaningful efficiency gains for customers. Google will have to persuade buyers that its integrated stack lowers total complexity and cost enough to justify wider deployment.

Investors are likely to evaluate the strategy on three fronts. The first is cloud revenue acceleration: whether AI products help Google Cloud sustain or improve growth versus major rivals. The second is monetization mix: whether enterprise AI contributes higher-value software and platform revenue rather than remaining mostly a driver of compute demand. The third is capital efficiency: whether Alphabet’s enormous infrastructure spending produces durable competitive advantage rather than merely keeping pace with an industry arms race. Cloud Next did not resolve those questions, but it did clarify the company’s answer. Google is betting that AI agents will be the commercial layer that links all of those pieces together.

For the broader tech sector, the significance of the conference is that it sharpens the next phase of the enterprise AI market. The debate is no longer simply about who has the best model benchmark or the flashiest demonstration. It is about who can persuade companies to rewire work around AI systems that do not just generate content, but participate in execution. By making agents the centerpiece of Cloud Next 2026, Google has declared that this is the category where it intends to compete most aggressively.

Whether that strategy succeeds will depend on adoption more than applause. Product launches at industry conferences can set a narrative, but enterprise spending follows evidence, integration and trust. Google’s task now is to turn the vision of the “agentic enterprise” into contracts, migrations and embedded usage across large organizations. If it can do that, Cloud Next 2026 may be remembered not just as another showcase of AI ambition, but as the point where Google tried to convert its AI research and infrastructure strengths into a more defensible enterprise software position.