Microsoft has unveiled a new generation of enterprise artificial intelligence chips, sharpening its effort to reduce reliance on Nvidia while giving Azure customers a more tightly integrated compute platform for large-scale AI workloads.

The launch, announced on April 25, places Microsoft more directly into the custom silicon race now reshaping the economics of cloud computing. The company is seeking to use internally designed accelerators to lower the cost of running AI models, improve infrastructure availability and capture more of the value chain behind enterprise AI adoption.

For Microsoft, the strategic logic is clear. Demand for AI compute has surged faster than the supply of high-end accelerators, and Nvidia’s graphics processing units remain the industry’s preferred hardware for training and running advanced models. That dominance has helped Nvidia become one of the most valuable companies in global markets, but it has also left cloud providers exposed to supply bottlenecks, procurement costs and dependence on a single external platform.

Microsoft’s answer is not to replace Nvidia overnight, but to build a broader silicon portfolio that can support selected workloads inside its own cloud. The company’s new chips are expected to be aimed primarily at enterprise deployment patterns, where performance per dollar, power efficiency, model-serving latency and software integration can matter as much as raw benchmark leadership.

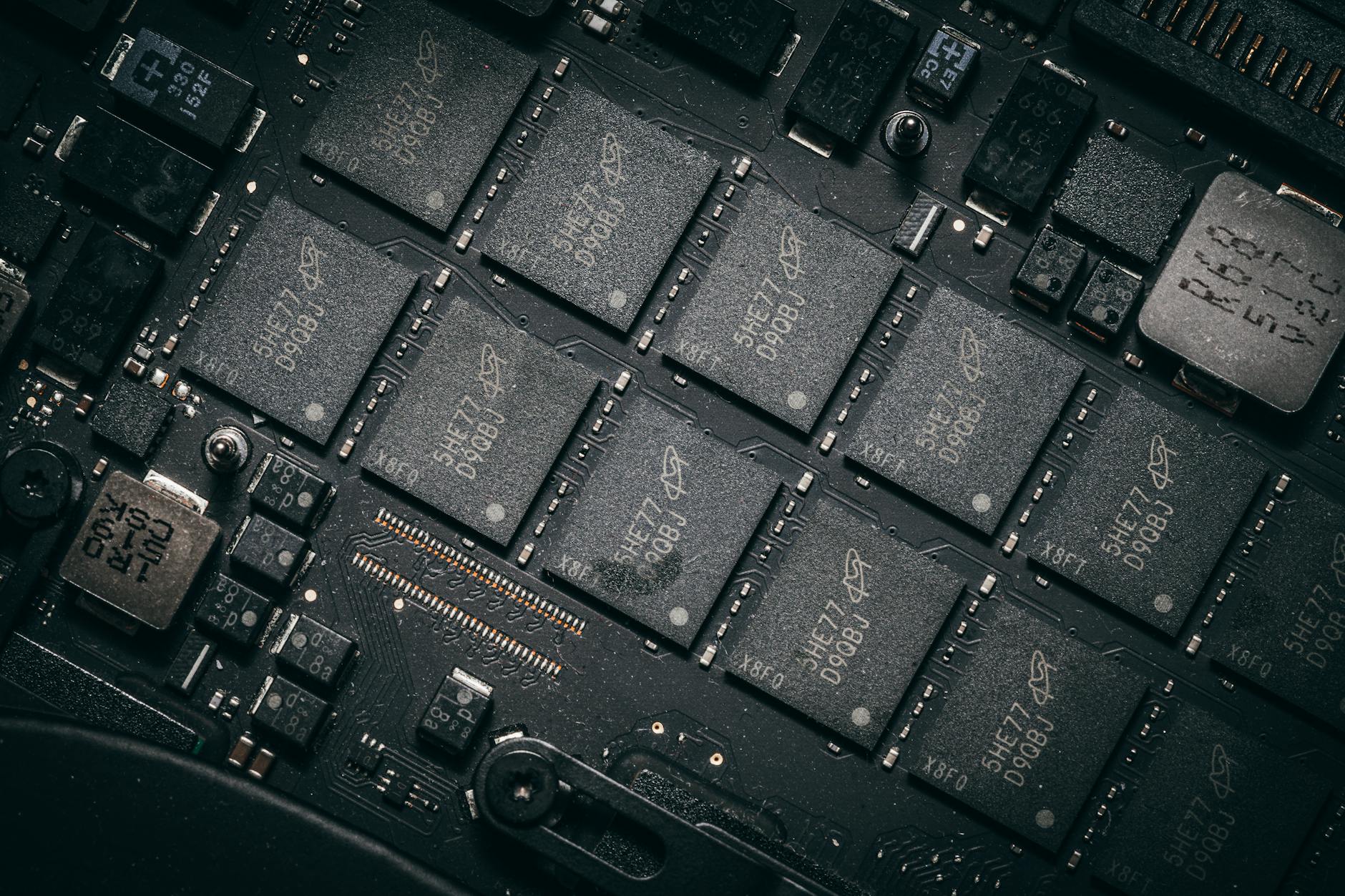

The latest rollout builds on Microsoft’s Maia accelerator strategy, which the company has used to support AI infrastructure inside Azure. Earlier this year, Microsoft introduced the Maia 200, a second-generation AI accelerator intended to improve inference performance and give developers more control over how AI workloads run across Microsoft’s data-center fleet. Reuters reported in January that Microsoft was pairing Maia 200 with new software tools, including Triton-based development work, as part of a broader effort to challenge Nvidia’s hardware and software ecosystem.

The April announcement signals that Microsoft wants its chip program to move beyond internal experimentation and become a more visible element of its enterprise cloud pitch. In practice, that means making custom silicon part of a broader package: Azure infrastructure, Microsoft 365 Copilot, GitHub Copilot, model hosting, security tooling and enterprise AI governance.

That integration matters because corporate AI buyers are increasingly focused on total deployment cost rather than the headline capabilities of individual models. Many companies have moved beyond pilot projects and are now evaluating whether AI agents, coding assistants, customer service automation and internal workflow tools can be scaled economically. Hardware costs sit at the center of that calculation.

If Microsoft can run more inference workloads on its own chips, it may be able to improve margins inside Azure while offering customers more predictable capacity. Inference, the process of running trained AI models to generate outputs, is expected to represent a growing share of AI compute demand as enterprise usage increases. Training large models remains expensive and technically demanding, but repeated model execution across millions of users can become an even larger long-term infrastructure burden.

The chip announcement also reflects a broader industry turn toward vertical integration. Google has long invested in tensor processing units for its cloud and internal AI operations. Amazon Web Services has developed Trainium and Inferentia chips. Meta, Tesla and other large technology companies are also working on specialized silicon to support their own AI ambitions. The common objective is to reduce dependence on external suppliers, optimize hardware for proprietary workloads and improve control over infrastructure roadmaps.

Microsoft’s challenge is that Nvidia’s advantage extends beyond chips. Nvidia’s CUDA software ecosystem, developer familiarity, networking technology and system-level integration have made its platform difficult to displace. Large AI labs and enterprise developers often build around Nvidia because the software stack is mature, widely supported and deeply embedded in AI engineering workflows.

That is why Microsoft’s silicon strategy must be judged not only by raw chip performance, but also by software compatibility. A custom accelerator with limited developer support could remain a narrow internal tool. A chip supported by usable compilers, libraries, orchestration systems and migration pathways could become a meaningful enterprise alternative for selected workloads.

Microsoft appears to be pursuing the second path. By aligning its chips with Azure’s AI services and developer tools, the company is attempting to reduce the friction customers face when moving workloads across hardware types. The objective is not necessarily to convince enterprises to choose chips directly, but to make the underlying hardware less visible while improving cost and performance inside managed cloud services.

The move comes at a time when capital spending across the AI sector remains elevated. Microsoft, Alphabet, Amazon and Meta have all been expanding data-center capacity to support generative AI demand. Reuters reported this week that Microsoft plans a major AI and cloud investment in Australia, with the company aiming to expand local cloud and AI capacity significantly by 2029. That spending underscores the scale of infrastructure required to support AI applications across regions and regulated industries.

Investors are watching closely because AI infrastructure has become both a growth driver and a margin risk for Big Tech. Microsoft’s cloud business has benefited from AI demand, but the company must continue spending heavily on servers, networking, power and data centers. Custom chips could help improve the return on that spending if they reduce per-query costs or increase the amount of AI capacity Microsoft can deliver from each data-center buildout.

The competitive implications for Nvidia are nuanced. Microsoft remains a major Nvidia customer and is unlikely to stop buying Nvidia GPUs, whether for leading-edge training clusters or to meet broad customer demand. Nvidia’s newest systems remain central to the market for frontier AI development. But every successful custom chip deployment by a hyperscaler reduces the share of future AI workloads that must run on Nvidia hardware.

That makes Microsoft’s announcement part of a gradual diversification trend rather than a single disruptive event. The AI hardware market is expanding rapidly enough that Nvidia can continue growing even as customers add alternative chips. But hyperscaler silicon programs may cap Nvidia’s long-term pricing power in cloud inference, especially for mature workloads that can be optimized on proprietary accelerators.

For enterprise customers, the near-term effects may be practical rather than dramatic. Companies using Azure AI services could eventually see broader availability of lower-cost compute options, better regional capacity and improved performance for Microsoft-optimized models. Regulated industries may also benefit if custom infrastructure helps Microsoft offer more controlled deployment environments for sensitive workloads.

Still, execution risks remain. Designing advanced AI chips is technically complex, and production depends on sophisticated manufacturing supply chains dominated by Taiwan Semiconductor Manufacturing Co. and a limited number of packaging and memory suppliers. Even when chips are successfully designed, scaling them across global data centers requires extensive validation, power planning, cooling infrastructure and software tuning.

Microsoft also faces timing pressure. AI model architectures are evolving quickly, and hardware roadmaps must anticipate future workloads years before they reach full deployment. A chip optimized for one generation of models can lose relevance if customer demand shifts toward different memory, networking or inference requirements. Nvidia’s advantage has partly come from its ability to refresh systems rapidly while supporting a wide range of AI workloads.

The broader market context is also changing. Google’s recent enterprise AI announcements showed how aggressively cloud rivals are packaging custom chips with AI platforms, agents and model services. Reuters reported this week that Google placed AI agents at the center of its enterprise monetization strategy while introducing new TPU chips for training and inference. That puts pressure on Microsoft to show that its own AI stack can compete not only at the application layer, but also in the infrastructure layer beneath it.

Amazon is pursuing a similar approach through AWS, where Trainium and Inferentia are positioned as lower-cost options for AI workloads. The result is a cloud market in which chip strategy is increasingly tied to enterprise software strategy. Providers are no longer competing only on storage, compute and developer tools; they are competing on the full economics of AI deployment.

Microsoft’s advantage is its enterprise distribution. The company already has deep relationships with corporate IT departments through Windows, Office, Teams, Azure, Dynamics, GitHub and security products. If it can embed custom-chip economics into those services, it may not need customers to think about silicon at all. The chips can become an invisible lever behind pricing, performance and product availability.

That approach could be especially relevant for AI agents, which require repeated model calls, data retrieval and task execution across business systems. As agentic AI moves from demonstration to deployment, infrastructure costs may rise sharply. A more efficient inference platform could make the difference between a high-cost productivity experiment and a scalable enterprise product.

Microsoft’s new chip rollout also strengthens its negotiating position with suppliers. Even partial substitution can give a large buyer more leverage in procurement discussions. Nvidia will remain essential, but Microsoft’s ability to route some workloads to internal silicon may improve supply flexibility and reduce exposure to price spikes during periods of intense demand.

The announcement is therefore best understood as a strategic infrastructure move. It does not end Nvidia’s dominance, and it does not guarantee that Microsoft will become a leading merchant chip designer. Instead, it shows that Microsoft sees custom silicon as a necessary component of cloud competitiveness in the AI era.

For the technology sector, the message is that the AI race is moving deeper into the stack. Models, applications and agents remain the visible layer, but the economics will increasingly be decided by chips, data-center design, software runtimes and power availability. Microsoft’s enterprise AI chip push is another sign that the largest cloud providers are building not just AI products, but AI factories.

The market will now look for evidence of deployment scale, customer availability and measurable cost improvements. Until those details become clear, the announcement is a statement of intent as much as a product milestone. But in a market where AI infrastructure capacity has become a strategic asset, Microsoft’s move reinforces the view that Big Tech’s next phase of competition will be fought as much in silicon as in software.