Taiwan Semiconductor Manufacturing Co. has warned that supply of its most advanced chips is set to remain tight as artificial intelligence demand continues to run ahead of capacity, reinforcing the company’s position as both the chief beneficiary and a critical bottleneck in the global AI infrastructure buildout.

The warning, reported by the Financial Times and echoed by recent company disclosures, comes as the world’s largest contract chipmaker accelerates investment in leading-edge manufacturing nodes and advanced packaging. The pressure is concentrated in the highest-value parts of the semiconductor supply chain: advanced logic processes used for AI accelerators and packaging technologies that combine large compute dies with high-bandwidth memory.

For cloud companies, chip designers and enterprise technology buyers, the message is straightforward. Even as TSMC raises capital spending and expands its manufacturing footprint, the industry’s most advanced AI processors are likely to remain subject to allocation, long planning cycles and strong pricing power for the foundry. That dynamic could affect the timing of new AI server deployments, the cadence of product launches from major chip vendors and the cost structure behind cloud AI services.

TSMC’s latest comments follow a strong first quarter in which the company said its business was supported by demand for leading-edge process technologies. In its April 16 results release, the company said it expected second-quarter performance to continue benefiting from strong demand for those technologies. That demand gives TSMC unusual visibility into customer orders, but it also raises pressure on management to expand supply without overbuilding ahead of a cyclical downturn.

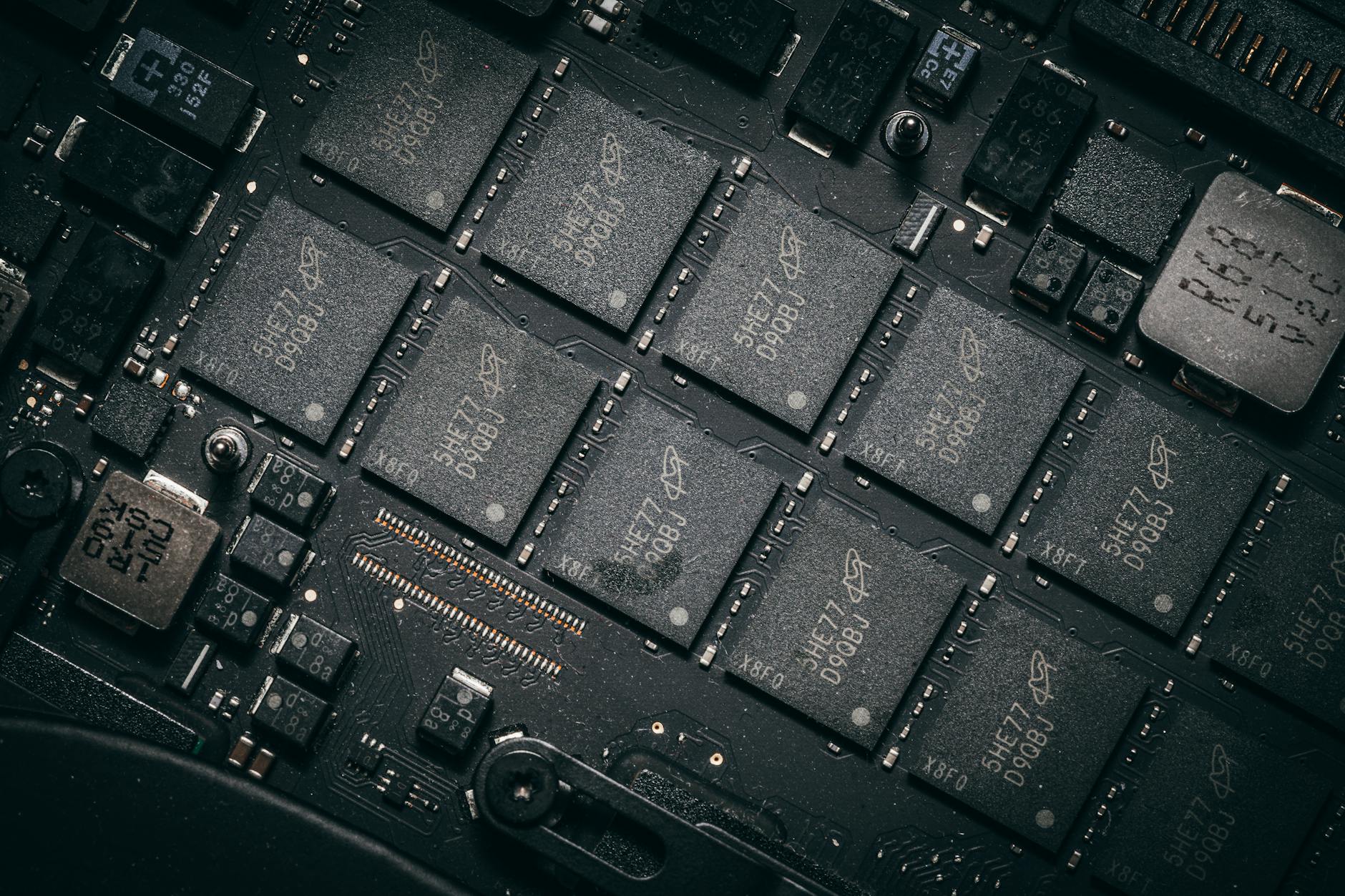

The constraint is not only about wafer starts. AI processors require an increasingly complex combination of advanced logic, high-bandwidth memory and packaging integration. TSMC’s Chip on Wafer on Substrate, or CoWoS, platform has become a crucial technology for assembling high-performance AI accelerators. Without sufficient packaging capacity, additional wafer output alone may not translate into finished chips that hyperscale customers can install in data centers.

TSMC used its 2026 North America Technology Symposium to highlight that packaging challenge. The company said it is continuing to expand CoWoS technology to support AI demand for more computing power and memory in a single package. It is currently producing 5.5-reticle-size CoWoS and plans a 14-reticle-size version for 2028, capable of integrating roughly 10 large compute dies and 20 high-bandwidth memory stacks, followed by even larger versions in 2029.

That roadmap reflects the way AI computing is changing semiconductor manufacturing. Customers are no longer buying only smaller transistors. They are demanding full systems-level scaling, where performance gains depend on logic density, memory bandwidth, interconnect architecture, power delivery and packaging area. TSMC’s ability to offer that stack is why its capacity outlook matters well beyond Taiwan’s manufacturing sector.

For Nvidia, AMD, Broadcom and other AI chip designers, TSMC’s advanced process and packaging capacity has become a strategic resource. Customers typically book capacity years in advance, particularly for high-performance computing products that require long design cycles and large wafer commitments. When demand accelerates faster than expected, the market can tighten quickly because new fabs, tools and packaging lines take years to qualify at scale.

The supply warning also lands as global technology companies continue to raise AI infrastructure spending. Major cloud providers are committing tens of billions of dollars to data centers, servers, networking equipment and custom accelerators. That spending wave has improved visibility for TSMC and other semiconductor suppliers, but it has also made the industry more sensitive to any delay in the manufacturing chain.

TSMC has been responding with higher capital expenditure and a broader global capacity plan. In its first-quarter earnings materials, the company said it was stepping up investment to increase 3-nanometer capacity to meet strong AI demand. It also said it was executing a global capacity plan to support a multiyear pipeline of demand for 3-nanometer technologies, one of the key manufacturing platforms for current and next-generation AI chips.

The company’s international expansion is another part of the supply response. Reuters reported on April 22 that TSMC plans to open a chip packaging plant in Arizona by 2029, an important move because advanced chips produced in the United States have often still required packaging in Taiwan. A local packaging capability would help close part of that loop, though it will not immediately resolve the near-term imbalance in AI chip supply.

The Arizona buildout also highlights the geopolitical dimension of advanced chip capacity. The United States, Japan and Europe have all pushed to localize parts of the semiconductor supply chain after pandemic-era shortages and rising security concerns around Taiwan. TSMC’s overseas facilities may improve resilience over time, but advanced manufacturing remains difficult to duplicate quickly because it depends on equipment, materials, engineering talent and tightly controlled process know-how.

For investors, tight supply has two opposing implications. On one side, it supports TSMC’s revenue visibility, utilization rates and negotiating leverage with large customers. Scarcity in advanced nodes and packaging can sustain premium pricing and justify elevated capital expenditure. On the other side, capacity shortages could cap near-term volume growth if customers cannot secure enough finished chips to meet demand.

The risk for the broader AI trade is that supply tightness could slow deployment schedules. Cloud providers have been racing to add capacity for training and inference workloads, while enterprises are moving from pilot projects toward production AI systems. If accelerator availability becomes a limiting factor, some revenue across the cloud, server, networking and software ecosystems could be pushed into later quarters.

Still, the current shortage differs from earlier semiconductor cycles. Previous crunches often centered on autos, consumer electronics or mature-node components. The present constraint is concentrated in the most advanced parts of the market, where demand is driven by AI infrastructure and where only a small number of suppliers can meet technical requirements. That makes the bottleneck more strategic and less easily solved by shifting orders to second-tier capacity.

Competitors are trying to narrow the gap. Intel is seeking to expand its foundry business, Samsung remains a major advanced logic and memory producer, and packaging suppliers are increasing investment in high-end assembly. But TSMC retains a dominant position in outsourced leading-edge manufacturing, and its execution track record keeps it embedded in the product roadmaps of the largest AI chip customers.

Equipment suppliers are also part of the equation. ASML, the key provider of advanced lithography systems used in leading-edge chipmaking, said this week that it does not expect to become the industry’s bottleneck, according to Reuters. That assurance matters because shortages of lithography tools would further complicate foundry capacity expansion. Even so, avoiding one bottleneck does not eliminate constraints in packaging, substrates, high-bandwidth memory or qualified manufacturing lines.

TSMC’s technology roadmap shows how far ahead customer planning must extend. The company has outlined future process nodes and packaging platforms through the end of the decade, with AI and high-performance computing becoming central design targets. As chips grow larger and more integrated, each generation requires more coordination among foundries, memory suppliers, substrate makers, equipment vendors and hyperscale customers.

The commercial effect is likely to be a more disciplined allocation market. Customers that can commit early, provide demand visibility and pay for capacity assurance may be better positioned than smaller buyers relying on spot availability. That could reinforce the advantage of the largest cloud platforms and chip designers, while making it harder for emerging AI hardware companies to secure sufficient supply at competitive cost.

TSMC’s warning also complicates assumptions about AI cost deflation. Many technology companies expect model training and inference costs to decline over time as chips become more powerful and software becomes more efficient. But if the most capable chips remain scarce, cost reductions may come more slowly. Capacity additions can improve supply, but the capital intensity of each new generation means lower unit costs are not guaranteed in the near term.

For TSMC, the challenge is to expand aggressively while preserving returns. Semiconductor demand remains cyclical, and management must avoid adding too much capacity for products whose demand could mature or shift. Yet AI demand has already proven stronger and more durable than many earlier forecasts, giving the company an unusually strong basis for long-term investment.

The next indicators will be customer commitments, capital expenditure guidance and progress on advanced packaging expansion. Investors will also watch whether AI chip lead times stabilize or continue to stretch, and whether cloud providers adjust deployment schedules around supply availability. Any sign that TSMC can relieve the bottleneck faster than expected would support the AI hardware supply chain. Any further tightening would amplify concerns that compute availability, not software ambition, is becoming the limiting factor for the next stage of AI growth.

For now, the foundry’s message is that demand remains ahead of supply at the frontier of chipmaking. That is favorable for TSMC’s strategic position, but it also confirms that the AI boom is increasingly constrained by physical manufacturing capacity, not only by algorithms, capital budgets or customer appetite.